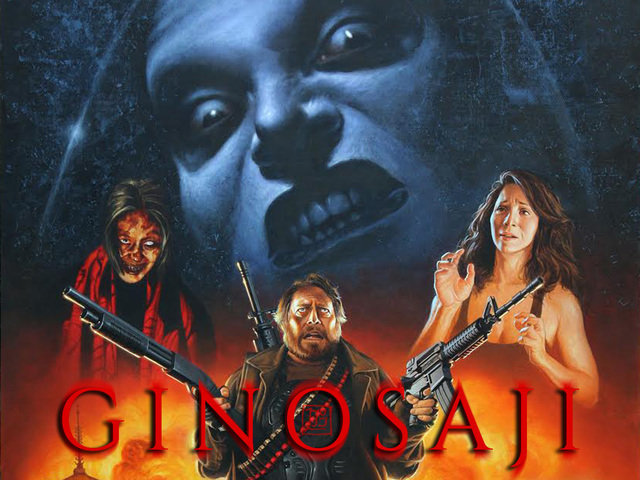

Update + Motion Capture Test / MetaHumans

– Wed, Jun 30, 2021 at 07:43:51 AM

Hello Friends of the Spoon,

I hope all of you are doing well and staying healthy.

The Ginosaji project remains on hold as I continue to care for my elderly Mother, who is suffering from serious health issues. I've been her full-time caregiver for more than two years now, since my Dad passed away in early 2019. They were married for 55 years.

She had a terrible fall last August, which resulted in surgery and a week in the hospital. This made an already difficult situation more complicated: because of severe disabilities, she's unable to walk and cannot take care of herself without assistance. The pandemic complicated things further, with the threat of COVID putting her at serious risk. But in one way it's simple: her life and well-being are my top priorities.

This has been the most challenging period of my life, but I am glad that I am able to help her. If it weren't for her, the Horribly Slow Murderer films would never have existed in the first place, and neither would I. I am grateful for the messages of support many of you have sent me.

I have resisted posting an update these many months since we had no positive progress to report on the project, aside from the fact that I am surviving and have been managing an insanely difficult situation. We still fully intend to produce Ginosaji when it is possible, no matter what.

I apologize for the lack of updates. I understand it may have led some of you to think that the project is never going to get made. But that is not accurate.

The project is still on hold, but make no mistake - the Ginosaji project is alive and ultimately, it is going to be awesome.

Motion Capture Tests and MetaHumans

In bits of free time, I'm learning how to do motion capture, and have been testing new software that creates digital people who appear incredibly lifelike. It's called MetaHuman Creator, part of Unreal Engine (the same technology powering the virtual sets in The Mandalorian, and video games like BioShock Infinite and Fortnite). Real actors provide the performance, which is motion captured and applied to the MetaHuman. This is groundbreaking stuff.

See their trailer plus my first test below:

https://www.youtube.com/watch?v=t3FFmc6xaos

My first MetaHuman MoCap test:

https://www.youtube.com/watch?v=PbDuO7FMYF8

How It Works

I'm using an iPhone to motion capture my face. It tracks every muscle in my face, plus my eyes and head position, and sends the tracking data in real time to control the MetaHuman's face and head in Unreal Engine. Some of the lip movements are not there yet, but for the Alpha version, I'm impressed by how natural it looks.

I was accepted into their early access program to test it out. When the full, improved version releases later this year, I think it could open up exciting new opportunities for films and video games.

I wish I had more progress to report. But that's it for now.

Sticking with this project requires a massive amount of patience and understanding on your part, plus the tenacity of a Ginosaji. I am grateful for all of you who have remained positive and supportive since the beginning. It is still our plan to deliver a lot more than was originally promised, and we will.

Richard